At Devox, we treat AI assessment as more than a technical review, and we encourage you to do the same. A serious AI strategy readiness evaluation answers three business questions and improves the chance of a successful AI implementation. By common industry estimates, about 80% of AI projects fail, typically because of weak data foundations, unclear ownership, over-optimistic use case selection, and workflow mismatch.

Devox Software specializes in preparing companies for AI-native delivery, so we’ve collected our hands-on insights into a holistic AI implementation readiness framework. It makes risks visible and gives CTOs a shared basis for making safe decisions.

If Not Now, Then When?

In the market, AI adoption is accelerating, but scale is still the exception. 88% of survey respondents say their organizations now use AI regularly in at least one business function, yet only about one-third say their companies have begun scaling AI programs. High performers are much more likely to redesign workflows, define human validation processes, and build around a strategy rather than jump straight into the middle of a transformation.

That gap between experimentation and scale is precisely why an enterprise AI readiness evaluation matters. Worker access to AI rose by 50% in 2025, while productivity and efficiency remain the most commonly reported benefits. Furthermore, industries most exposed to AI achieved 3x higher growth in revenue per employee.

The conclusion is that companies benefit most not from AI itself but from a well-tuned implementation backed by clear readiness discipline.

AI Readiness Assessment Framework: What Do We Assess?

What is an AI readiness assessment framework in general? Simply put, it is a structured way to evaluate whether a company can adopt and profit from AI. For instance, it checks whether the business case is strong enough, whether the underlying systems can support delivery, and whether the workflow is mature enough for adoption.

NIST’s AI Risk Management Framework is organized around four functions: GOVERN, MAP, MEASURE, and MANAGE. Its approach extends to non-technical aspects such as accountability, risk ownership, monitoring, and lifecycle control. So when leaders ask how to assess AI readiness in enterprises, the answer is simple: assess value, delivery capability, operational fit, and governance at the same time.

For this purpose, we’ve prepared a practical AI adoption framework for business that works well for mid-size and enterprise environments. Earlier, we discussed how to choose the right AI vendor for your environment and needs; now, we combine those criteria with scores that measure AI readiness at the firm level.

| Dimension | What to evaluate | Proof | Score |
| --- | --- | --- | --- |
| Strategy and value | Clear use cases, business owner, KPI baseline, ROI hypothesis | Sponsor, target metric, prioritization logic | 0–5 |
| Data readiness | Data quality, access, labeling, governance, proprietary relevance | Trusted datasets, lineage, permissions, freshness rules | 0–5 |
| Technology and architecture | Integration points, APIs, cloud/on-prem fit, observability, MLOps needs | Architecture map, integration backlog, monitoring plan | 0–5 |
| Workflow fit | Where AI fits into real business processes and where human review is needed | Process map, approval logic, exception paths | 0–5 |
| People and capability | Skills, ownership, AI literacy, and an enablement plan | Named team, training plan, operating roles | 0–5 |
| Governance and risk | Security, privacy, model validation, policy, compliance | Risk register, guardrails, review process, audit trail | 0–5 |
| Operating model and scale | Funding, prioritization, delivery cadence, support model | Steering model, budget, roadmap, change process | 0–5 |

As a result, we see that strong AI outcomes are tied to leadership ownership, workflow redesign, talent strategy, technology, and data infrastructure, not to technology alone. So let’s consider them in detail.

AI Readiness Scoring Framework and Template

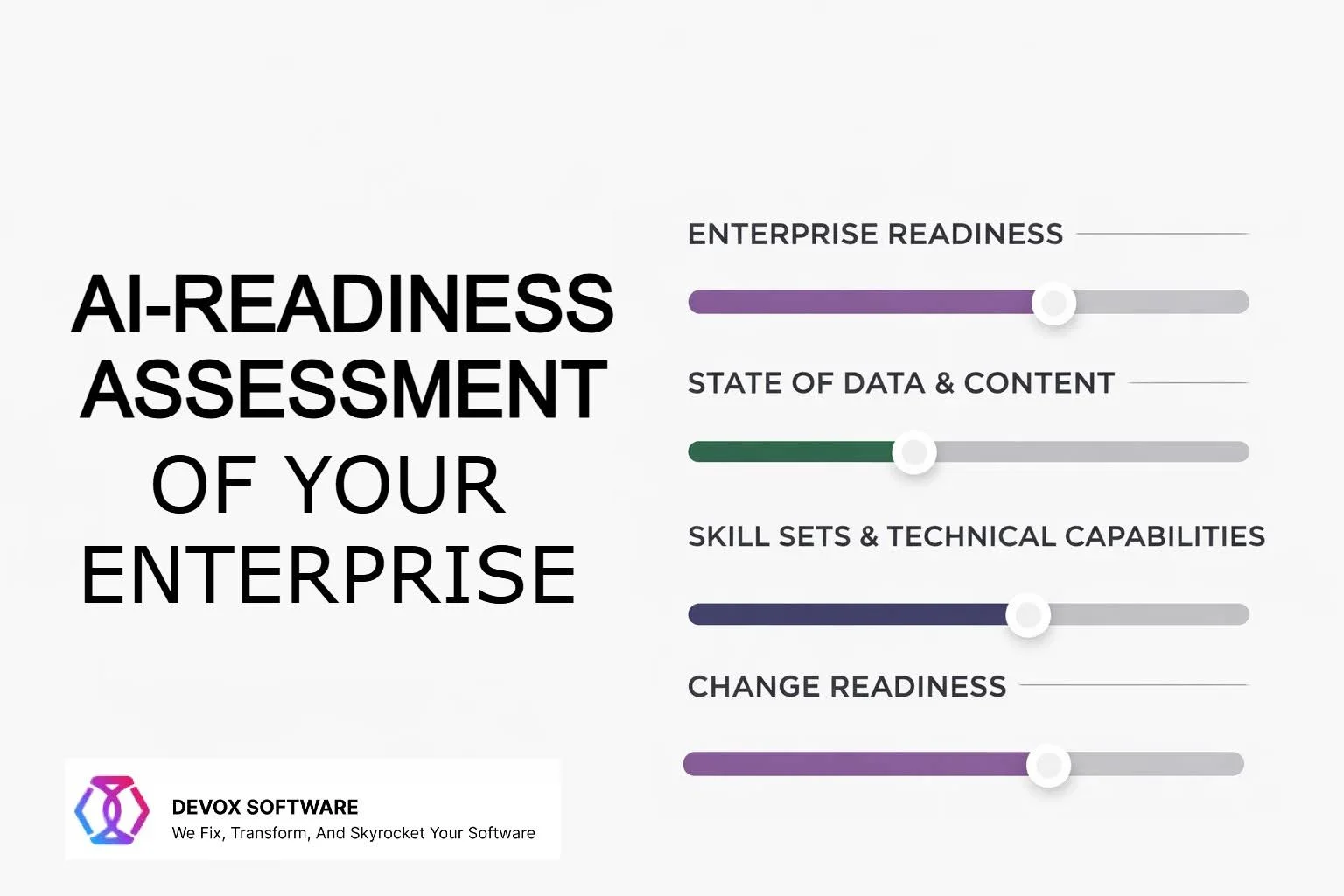

An AI maturity assessment for enterprises covers four main patterns, each explained below.

Strategic Enterprise Readiness

Business frameworks for AI readiness evaluation should answer the following:

- Which business metrics should AI improve?

- Which processes would benefit most from automation or prediction?

- Is there executive sponsorship and budget continuity?

To keep AI from becoming an isolated experiment without a strategy, companies must link AI use cases to revenue, cost efficiency, or client retention so that AI output has commercial value. We recommend starting with quantifiable, repeatable activities such as sales forecasting and client segmentation, which tend to show ROI quickly.

State of Data and Content

Every AI model’s success rests on data. Low-quality, fragmented, or unavailable data undermines any project. That’s why a complete AI assessment includes the following:

- Data quality: comprehensive, accurate, consistent, timely

- Data accessibility: APIs, integrations, and permissions for data

- Data governance: ownership, compliance, documentation

- Infrastructure: storage, pipelines, and computational scalability

Through an AI assessment, most companies discover, often to their surprise, that their largest gap is data discipline, not AI models. So start with one well-governed data domain: standardize, clean, and enrich it before expanding to others.
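To make the data quality checks above more concrete, here is a minimal Python sketch that measures two of the listed properties, completeness and timeliness, for a small set of records. The function name `audit_records`, the field names, and the 30-day freshness window are illustrative assumptions, not part of any standard or of our framework.

```python
from datetime import date


def audit_records(records, required_fields, date_field, max_age_days, today):
    """Return completeness and freshness ratios (0.0-1.0) for a list of dicts."""
    if not records:
        return {"completeness": 0.0, "freshness": 0.0}
    # A record is complete when every required field has a non-empty value.
    complete = sum(
        all(r.get(f) not in (None, "") for f in required_fields) for r in records
    )
    # A record is fresh when it has an update date within the allowed age.
    fresh = sum(
        r.get(date_field) is not None
        and (today - r[date_field]).days <= max_age_days
        for r in records
    )
    n = len(records)
    return {"completeness": complete / n, "freshness": fresh / n}


# Hypothetical customer records, for illustration only.
records = [
    {"customer_id": "C1", "email": "a@example.com", "updated": date(2025, 1, 10)},
    {"customer_id": "C2", "email": "", "updated": date(2025, 1, 5)},
    {"customer_id": "C3", "email": "c@example.com", "updated": date(2024, 6, 1)},
    {"customer_id": "C4", "email": "d@example.com", "updated": None},
]

result = audit_records(
    records,
    required_fields=["customer_id", "email"],
    date_field="updated",
    max_age_days=30,  # assumed freshness rule
    today=date(2025, 1, 15),
)
print(result)  # {'completeness': 0.75, 'freshness': 0.5}
```

Ratios like these are only a starting point; accuracy and consistency usually need domain-specific rules agreed with the data owner.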

Skillsets and Technical Capabilities

Furthermore, an effective AI readiness assessment is impossible without evaluating the following:

- Internal talent

- Non-technical team upskilling

- Collaboration between data teams and business departments

- Leadership and interdepartmental governance

However, skills and leadership are not enough. Without good infrastructure, even the best-trained model fails. Therefore, an enterprise AI readiness assessment should also cover the following:

- Cloud and compute readiness (AWS, Azure, GCP)

- MLOps capabilities: model CI/CD, versioning, monitoring

- Integration with APIs and legacy systems

- Data pipelines and security

Serverless or containerized platforms simplify deployment. Establish a scalable, compliant AI sandbox that is separate from production.

Change Readiness

Legal and reputational problems often arise from ungoverned AI implementation. A mature corporate AI readiness evaluation should therefore confirm the following:

- Data use ethics and algorithmic fairness

- Bias detection and mitigation

- Compliance with GDPR, HIPAA, or local equivalents

- AI output transparency and explainability

However, if people reject automation, even a well-governed implementation can fail. For this reason, the AI readiness test should also assess communication plans, training plans, and incentive alignment.

Enterprise AI Maturity Model

Together, the four patterns feed a practical AI readiness scoring framework that gives each of the seven dimensions a score from 0 to 5 and combines them into a weighted overall score. An overall score below 2.0 indicates the company is not ready for production AI. A score of 2.0–3.0 suggests readiness for narrow pilots. A score of 3.0–4.0 means the company can deploy regulated production use cases, while a score above 4.0 is typical of enterprises running multiple AI products.

You can use this simple weighting model as an AI readiness assessment template:

- Strategy and value: 20%

- Data readiness: 20%

- Technology and architecture: 15%

- Workflow fit: 15%

- People and capability: 10%

- Governance and risk: 15%

- Operating model and scale: 5%

A common mistake is to give infrastructure too much credit when the business case and workflow are still weak.
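The weighting model can be sketched in a few lines of Python. The weights and score bands come from this section; the function names, the band labels, the handling of a score of exactly 3.0, and the example scores are assumptions made for illustration.

```python
# Weights copied from the list above; they sum to 1.0.
WEIGHTS = {
    "Strategy and value": 0.20,
    "Data readiness": 0.20,
    "Technology and architecture": 0.15,
    "Workflow fit": 0.15,
    "People and capability": 0.10,
    "Governance and risk": 0.15,
    "Operating model and scale": 0.05,
}


def readiness_score(scores: dict[str, float]) -> float:
    """Return the weighted average of the seven 0-5 dimension scores."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing dimension scores: {sorted(missing)}")
    for name in WEIGHTS:
        if not 0 <= scores[name] <= 5:
            raise ValueError(f"{name} must be between 0 and 5")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


def readiness_band(score: float) -> str:
    """Map an overall score to a band.

    Assumption: the text leaves a score of exactly 3.0 ambiguous;
    this sketch places it in the higher band.
    """
    if score < 2.0:
        return "not ready for production AI"
    if score < 3.0:
        return "ready for narrow pilots"
    if score <= 4.0:
        return "ready for regulated production use cases"
    return "ready for multiple AI products"


# Hypothetical company: strong infrastructure, weak business case and workflow.
example = {
    "Strategy and value": 2,
    "Data readiness": 2,
    "Technology and architecture": 5,
    "Workflow fit": 2,
    "People and capability": 3,
    "Governance and risk": 3,
    "Operating model and scale": 3,
}

overall = readiness_score(example)
print(f"{overall:.2f} -> {readiness_band(overall)}")
# 2.75 -> ready for narrow pilots
```

In this hypothetical case, a top score for technology and architecture still leaves the company in the pilot band, because strategy, data, and workflow fit together carry more than half of the weight.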

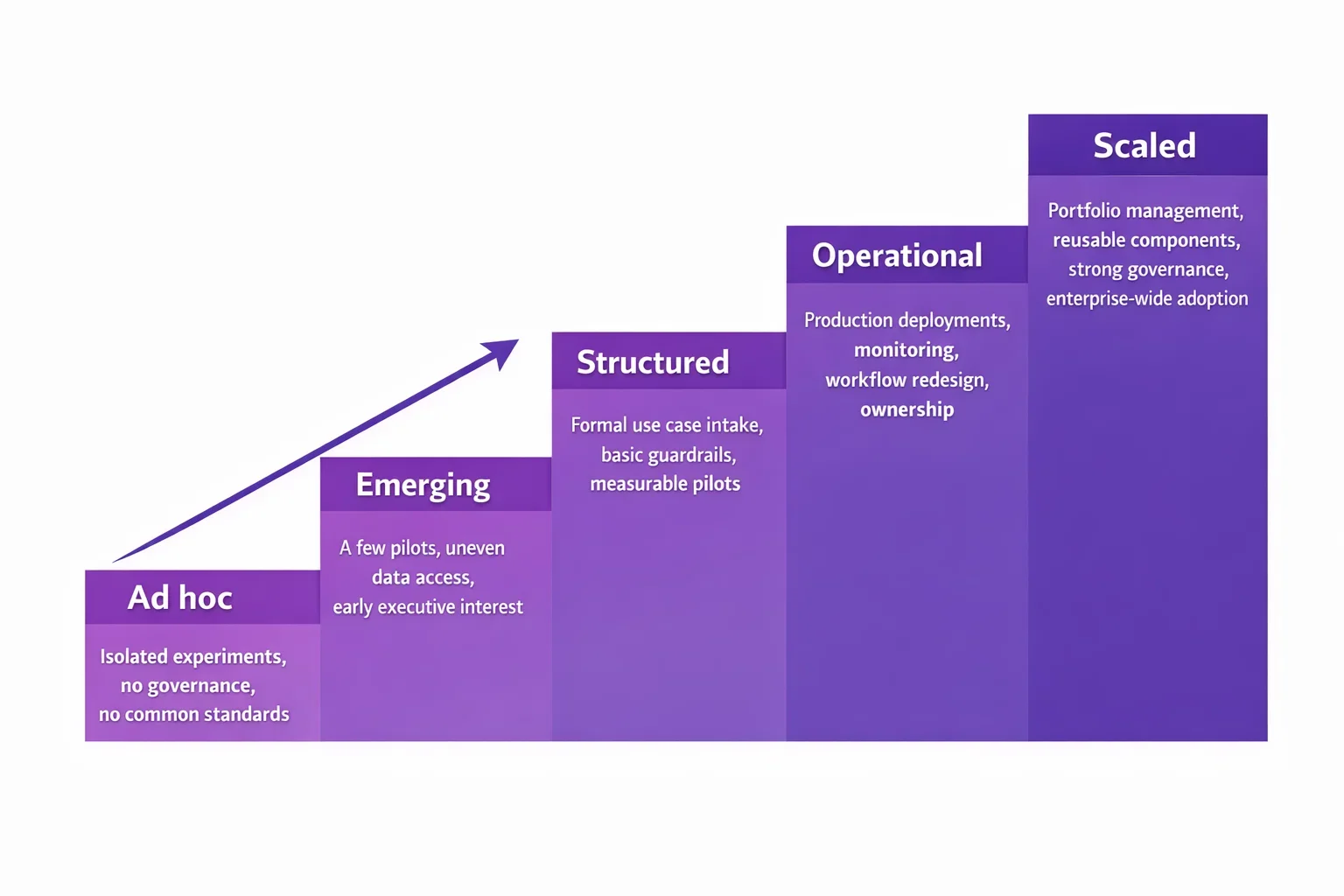

Stages of Enterprise AI Maturity Model

An artificial intelligence maturity model helps leadership understand not just current readiness but also the company’s current stage within the following spectrum.

| Maturity stage | Description |
| --- | --- |
| Ad hoc | Isolated experiments, no governance, no common standards |
| Emerging | A few pilots, uneven data access, and early executive interest |
| Structured | Formal use case intake, basic guardrails, measurable pilots |
| Operational | Production deployments, monitoring, workflow redesign, ownership |
| Scaled | Portfolio management, reusable components, strong governance, enterprise-wide adoption |

The goal of an AI readiness assessment for enterprises is not to “reach Level 5” as fast as possible. The real goal is to know what to improve right now for the greatest effect.

Ready-to-Use Cloud-Based Artificial Intelligence Vendor Service vs. Custom AI

The major benefit of ready-to-use cloud-based artificial intelligence is speed-to-value. Major vendors offer various solutions:

- AWS managed AI services and pre-trained models greatly reduce the resources needed for machine learning tasks.

- Microsoft’s Foundry Tools offer fully managed, scalable, prebuilt AI capabilities with a lower barrier to entry than bespoke machine learning.

- Google’s Vertex AI is a fully managed platform with access to 200+ models and tools, ranging from experimentation to implementation.

However, good old custom development is still on the table. So, how do you choose? Let’s compare the options.

| Criterion | Ready-to-use cloud-based artificial intelligence vendor service | Custom AI solution |
| --- | --- | --- |
| Time to first value | Faster | Slower |
| Upfront data requirement | Lower | Higher |
| Differentiation | Moderate | Higher |
| Operational burden | Lower | Higher |
| Compliance tailoring | Limited to platform options | Stronger control |
| Best fit | Common tasks like extraction, summarization, translation, and support automation | Core IP, proprietary workflows, domain-specific reasoning |

To sum up, a ready-to-use cloud-based AI vendor service is often the right first move when the task is common and repetitive, such as document extraction, translation, or summarization, while a custom solution is tailored to specific company processes, strict rules, and unique information. This makes a ready-to-use vendor service best suited for the initial phase of implementation.

How to Assess AI Readiness in Enterprises: Step by Step

To bring this together, here is a practical, step-by-step AI readiness checklist for CTOs:

- Define one business outcome first. Start with one measurable target: reduce handling time, increase sales conversion, or improve forecast accuracy.

- Map the workflow before the model. Define the process, exceptions, handoffs, and approval logic before choosing a model.

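- Audit the data reality. Verify that the data is accurate, accessible, permissioned, current, and relevant to the problem.

- Decide build vs. buy. Use prebuilt cloud AI when the task is standard and speed matters, and custom development when the use case involves core IP, proprietary workflows, or domain-specific reasoning.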

- Design governance before deployment. Governance has to include ownership, validation, monitoring, and risk handling from the start.

- Score readiness honestly. Run the AI readiness scoring framework across all seven dimensions and record a score for each.

- Launch a controlled pilot with production intent. Define a KPI, owner, rollback path, human oversight rule, and adoption metric.
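The last step of the checklist can be expressed as a simple pre-launch check. The sketch below is a minimal Python example, assuming a hypothetical `PilotCharter` record with one field per item named in that step; the example values are invented for illustration.

```python
from dataclasses import dataclass, fields


@dataclass
class PilotCharter:
    """Hypothetical record of the five items a controlled pilot should define."""

    kpi: str = ""
    owner: str = ""
    rollback_path: str = ""
    human_oversight_rule: str = ""
    adoption_metric: str = ""

    def missing_items(self) -> list[str]:
        """Names of charter items that are still blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def is_launch_ready(self) -> bool:
        """A pilot is launch-ready only when no item is blank."""
        return not self.missing_items()


# Illustrative values only.
charter = PilotCharter(
    kpi="Reduce average handling time",
    owner="Head of Support",
    adoption_metric="Weekly active agents",
)

print(charter.is_launch_ready())  # False
print(charter.missing_items())    # ['rollback_path', 'human_oversight_rule']
```

A check like this does not judge the quality of each item, but it stops a pilot from launching with the rollback path or the human oversight rule left undefined.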

AI Readiness Assessment Best Practices

Before concluding, here are the practices that affect the result the most. Having access to models is not the same as being ready to use them. Treating AI as a side project when it actually changes how the business operates is a costly mistake. Tie every use case to a KPI. Score data and workflow fit before making architecture changes. Use managed services when they solve the problem. Build governance into the design phase rather than adding it afterward.

The same logic can extend beyond core operations into HR, L&D, and even AI-ready formative assessments for internal upskilling.

Conclusion

First, an enterprise does not become AI-ready because it bought access to a model. Business value, workflow design, data quality, governance, talent, and delivery ownership account for most of a successful AI implementation. Making those factors visible is the real purpose of an AI readiness assessment framework.

Second, the strongest enterprises use readiness assessment to make three decisions well: where AI should be applied, where it should not, and what needs to be fixed before scale. That is how AI moves from isolated pilots to measurable operating impact.

Devox Software helps enterprises across industries implement AI models and rethink how AI-powered features fit into their business structure. We show how AI can drive real impact on a company’s revenue and performance, paving the way for future development.